The Mind of non-Cartesian Theater

To contextualize my work HUMAN SIMULATION, I organized a Cybernetic Conversation at the Beursschouwburg in March 2017 with Professor Francis Heylighen, head of the Evolution, Complexity, and Cognition research group at the Free University of Brussels and the Global Brain Institute [11]. His main research focus is the evolution of complexity. Three months later, in June 2017, we arranged the first Cybernetic, Algorithmic, Systemic Theater Symposium [22]. Artistic participants: Annie Dorsen, Orion Maxted, Špela Petrič, Miha Turšič, Nathan Fain, Ogutu Muraya, Noah Voelker, Leila Anderson, and Thomas Dudkiewicz. Scientific participants: Prof. Hans Westerhoff (Synthetic Systems Biology, UvA, VU, Amsterdam), Stefania Astrologo (researcher in the epigenetics of cancer) and Prof. Francis Heylighen. Curated by Orion Maxted, moderated by Chris Keulemans, enabled by Frascati Productions, at Frascati Theater, Amsterdam. Both these events brought together artists, theater makers and scientists in the fields of systems biology, cognitive science and cybernetics to build a common language around complex systems through theater experiments, lectures and conversations.

Francis Heylighen describes a complex system as the “spontaneous appearance of global order from local interactions, distributed across all components.” Complex systems theory, general systems theory, cybernetics, and the related areas of computation propose a paradigm for understanding the world and for creating systems that organize themselves spontaneously. These ideas are the underpinnings of the transformative technologies of our lifetime, including biotechnology, the Internet, and ecology–and they have been successfully applied to cognitive science, psychology, sociology, geopolitics, and economics. Moreover, these theories demonstrate a shared systemic basis of understanding that connects all these fields. Arguably, we are living through a revolution or second enlightenment of complex systems. So how does theater relate to these developments?

Cartesian theater and its little spectator

In this text, as in all of my recent work, I hope to demonstrate that theater can have the same principles as complex self-organizing systems. What’s more, I want to argue that theater as an art form is in fact uniquely placed to create such systems. Therefore, it is imperative that we “update” theater to the systemic worldview, so that–through its very operation–theater can reveal the structure it shares with the world and the mind.

During our conversations, Francis Heylighen and I came across the idea of The Cartesian Theater–a metaphor from cognitive science about the mind-body problem that, as we shall see, also indirectly points to complex systems and worldviews, and how they came to be. The invocation of theater in this context is obviously intriguing. In what follows, I will use The Cartesian Theater to try and examine the relationship between theater, the mind, and complex systems.

The metaphor of The Cartesian Theater makes explicit an image some of us have when we think about consciousness. Namely, the perception that input signals, which come from the outside–via our eyes, ears, nose, our sense of touch, and so on–are transferred to the brain (the inside), passing through all the nerves and neurons, until they finally end up at a specific place–a boundary or finishing line–where all the input signals come together on a screen or a miniature theater at the “center” of the brain (which most people locate a few centimeters behind the forehead) where “we,” or rather little versions of ourselves, sit observing all the sensory data that flash by, while we think, make decisions, and send out commands.

That idea is wrong, says Daniel Dennett, the American philosopher who coined the term Cartesian Theater in his book Consciousness Explained to warn us against this way of thinking about consciousness. The problem is that this approach requires a spectator–a Homunculus (literally: little man). That fact raises a lot of questions. Because how does the spectator manage to see? Surely he also needs a little theater inside his head? And that, in turn, would require an even smaller spectator, with an even smaller theater… And so on, and so on, reductio ad absurdum: an infinite regress. The explanation of the way we think has been replaced by another thinking entity–thereby explaining nothing. As Francis Heylighen puts it: “It’s as if a recipe for cake contains the ingredient ‘cake.’” Dennett’s point is that, clearly, this way of thinking about the mind is a mistake. Yet it’s hard for us to imagine consciousness otherwise because the idea of our brain as a “privileged center” is so sticky, seductive and appealing (just like cake).

A very brief history of worldviews

The bigger point Dennett wants to make is that we still hold on to remnants of Cartesian dualism, albeit in a materialist form. This actually affects how we see and act upon the world. To understand this, we need to trace a very brief history of worldviews by following in the footsteps of Gregory Bateson, the British cybernetician and anthropologist who goes back in time in Pathologies Of Epistemology, a chapter of his book Steps To An Ecology of Mind.

Early anthropological records suggest that the pagan religions regarded humankind as being one with its environment. At first, we took clues from our natural surroundings–patterns, animals, stories–and used those as metaphors to understand ourselves: the totemic worldview. Then the relationship seemingly reversed. We started from those stories about our lives and ourselves, and we used them to give meaning to the world. The stars, the rivers, and so on: the animist worldview. What happened next, though, was fatal. First, we separated the idea of the mind from the natural world, thereby arriving at the idea of the gods. Second, philosophers from Greece and India introduced a conception of the world fundamentally composed of separate parts: the atomist worldview.


Cut to the 17th century, post-Renaissance Europe, where these two worldviews collide: the gods (which by then have come to represent the Christian worldview) versus the atomists (by then synonymous with the reductionist mechanistic worldview). In the middle sits the doubting homunculus himself, René Descartes. Descartes is trying to synthesize these two worldviews. He wants to reclaim epistemological authority from God, yet he worries that the mechanical worldview (which explains animals as automata) leaves no room for a human mind, soul, or free will. So, to cut a long story short, this situation results in the Cartesian dualist worldview, composed of two separate kinds of substance: mind and matter.

From I think, therefore I am onwards, Descartes places mind above matter. He gave us the idea that the brain simply represents reality to the mind, with the brain acting as a kind of mirror of reality. This is known as the Naive Reflection-Correspondence theory of knowledge. Even though science has stopped believing in dualism (because how can separate mind-stuff and matter-stuff communicate if they are really different stuff?), the separation between mind and brain lives on in a secular materialist way, namely, in the separation Dennett describes through The Cartesian Theater as Cartesian materialism.

The underlying problem is that we see the world in terms of separation rather than connection, difference rather than identity. This separation, or the idea that the mind is located in a specific place, is a remnant of reductionism–the attempt to explain phenomena by dividing them into separate parts and then determining the properties of each part–and it prevents us from understanding how our minds function, and by extension how the world functions. Or as Bateson puts it: “When you separate mind from the structure in which it is immanent […] you thereby embark, I believe, on a fundamental error, which in the end will surely hurt you.” The idea of a separate “I” unilaterally controlling the body leads quickly to the use of power and coercion over others. It becomes “I” versus “You,” humankind versus Environment. And “the organism that destroys its environment destroys itself,” to quote Bateson again. We see ourselves as separate from the world as if observing it from a distance. We, human beings minus our environment, don’t really see how world events are connected, so crises just seem to appear from nowhere. The last thing we’re able to see is that those problems have their origin in thought, the very thing we thought could solve our problems. But when we change the unit from “I” to “I plus environment,” cooperation becomes the obvious strategy.

Towards a non-Cartesian theater?

Perhaps you’re wondering what this has to do with actual theater? After all, The Cartesian Theater is a metaphor about the brain-mind problem, not about theater itself. Nonetheless, theater has been invoked in this story of consciousness, worldviews, and complex systems, and that is not a coincidence.

Dennett is saying that we ordinarily think about the mind as a theater and that therefore we don’t understand the mind. Does this also work the other way around? In other words: is there an extent to which we actually think about theater as a Cartesian mind? And if so, is this way of understanding theater not in tune with our times in an age of complex systems?

Isn’t theater in effect staging perceptions for a homunculus? Doesn’t it still tacitly hold on to the image of itself as a “mirror,” exemplifying the Naive Reflection-Correspondence theory of knowledge? Isn’t it reinforcing the image of the separate spectator? Doesn’t theater maintain the Cartesian hierarchy of mind over brain (matter) that gives rise to a particular notion of power, with authority passing from the mind of the writer via the passive conduit of theater directly to the brain of the audience? Theater has tried to deal with some of these issues of power and spectatorship before. But perhaps there is something still unquestioned in the very dynamics of spectatorship that regards the spectator as the endpoint of information–something that makes us see the world according to past paradigms? Is the way we see theater, like the way we see the mind, still fundamentally built on remnants of past worldviews?

On the other hand, theater might be ideally placed to ask and answer these very questions–think of its tacit investment in understanding, modeling and modulating the cognition of the spectator; its ability to connect; to embrace difference; to be collaborative; its ability to show its own workings and processes; its ability to improvise (to “survive” on stage is already deeply autopoietic, to use a term from systems biology for systems that spontaneously (re)produce themselves); its capacity to highlight perception and participation; to invite people into its systems and view them from the inside; its capacity to talk about spectatorship, vision, power, systems. Can we imagine a theater that is not allopoietic–i.e. made from the outside by someone or something else, referring to a process whereby a system produces something other than the system itself–but autopoietic? A theater based on difference rather than identity, distributed rather than centralized, based on feedback loops rather than beginnings and endings? Can we imagine a network, like nature, where power and authority are constantly delegated and redistributed through the whole system? Can we imagine theater as a non-Cartesian mind, a complex systems mind?

Language and action distributed through a network of human actors

Dualism or reductionism get us nowhere; we need to understand a system not as located, nor as beginning or ending in any one part, but rather as a whole distributed across many components and their relationships. These relationships mean that the whole has properties that its components lack.

The relationships between the components are more important than the components themselves. That’s because, in systems theory, every component is itself a smaller system, composed of parts and relationships–with each of its parts, in turn, being a smaller system, composed of parts and relationships–and so on. In the end, everything boils down to relationships (of relationships of relationships). The material basis is, therefore, an abstraction in systems theory–it doesn’t matter if something is mind or matter; what counts is the way the relationships are organized. This transcends the mind-brain dichotomy arising from Cartesian dualism. Examples of components in such a complex adaptive system range from proteins, neurons, and cells to computers, companies, and people. This last “category” shows that we really can use theater as a substrate for implementing actual complex systems.

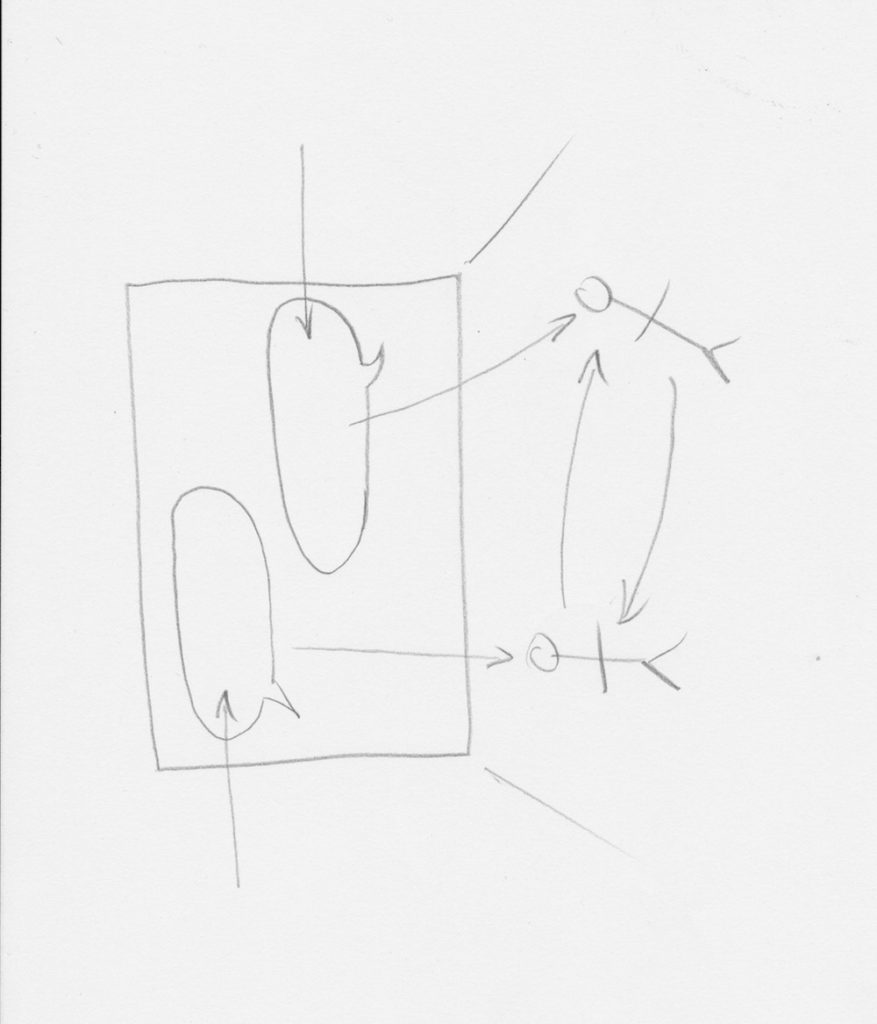

The most basic distinction in systems theory is the boundary between a system and its environment. The environment consists of everything that is not part of the system, but that interacts directly or indirectly with it. The system boundary is always subjective, porous, shifting or shiftable. The relationship between system and environment is conceptualized as input and output. The system responds to input–i.e., a change in its relationship to the environment–as soon as the system detects it. This causes the system to act upon the environment in some way, creating output. The flow from input to output is a process, a transformation.

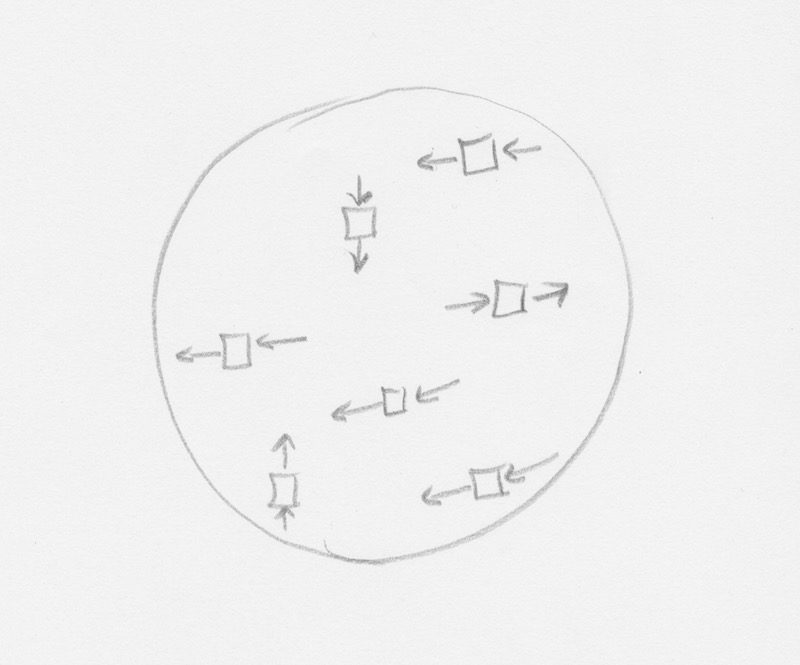

In the kind of theater that I am proposing, the performance represents the workings of a system, while the typically human actors are its components. (They can also be called “agents,” or “nodes.”) Different systems or actors can be joined together when the output of one becomes the input of another. There are three basic ways of coupling systems: sequential, parallel, or circular. When two or more systems are joined, they become a single system. So what we end up with is the image of a network of people, in which language and action are distributed and thus collectively transformed. In short: the equivalent of thought in the brain.
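As a minimal sketch of these three couplings, assume each actor can be modelled as a plain function that turns an input into an output. The function names and the two example actors below are hypothetical illustrations, not part of the original work.

```python
from typing import Callable, List

# A system transforms an input into an output.
System = Callable[[str], str]


def sequential(a: System, b: System) -> System:
    """The output of a becomes the input of b."""
    return lambda x: b(a(x))


def parallel(a: System, b: System) -> Callable[[str], List[str]]:
    """Both systems receive the same input; their outputs sit side by side."""
    return lambda x: [a(x), b(x)]


def circular(a: System, b: System, rounds: int) -> System:
    """The output of b is fed back into a for a fixed number of rounds."""
    def run(x: str) -> str:
        for _ in range(rounds):
            x = b(a(x))
        return x
    return run


# Two hypothetical actors, each applying one simple rule to what it hears.
def echo(text: str) -> str:
    """Repeat the last word of a non-empty text."""
    return text + " " + text.split()[-1]


def shout(text: str) -> str:
    """Turn the text into capitals."""
    return text.upper()
```

In each case the coupled result can itself be treated as a single system, which is the point made above: `sequential(echo, shout)` is one function, just as two joined actors become one system.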

There are many ways of organizing the structure of the network and many different rules, conditions, actions, etc., with which to form the relationships. Some of the most important structures are circular. Positive feedback loops–where more of “this” causes more of “this”–can be explosive, causing the system to become nonlinear and to run away. Negative feedback loops, on the other hand–where more of “this” causes less of “this”–allow a system to correct itself and settle toward a stable state.
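A small numerical sketch can make the two kinds of loop concrete: a reinforcing loop, where more of a quantity causes more of it, and a balancing loop, where more of it causes less. The update rules, the `gain` parameter, and the `target` value are illustrative assumptions, not taken from the performance.

```python
from typing import List


def reinforcing(x: float, gain: float, steps: int) -> List[float]:
    """More of x causes more of x: each step adds a share of the current value."""
    history = [x]
    for _ in range(steps):
        x = x + gain * x
        history.append(x)
    return history


def balancing(x: float, target: float, gain: float, steps: int) -> List[float]:
    """More of x causes less of x: each step removes a share of the gap to the target."""
    history = [x]
    for _ in range(steps):
        x = x - gain * (x - target)
        history.append(x)
    return history
```

With a gain of 1.0 the reinforcing loop doubles at every step and runs away, while the balancing loop with a gain of 0.5 halves its distance to the target at every step and settles.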

The Wisdom of Crowds

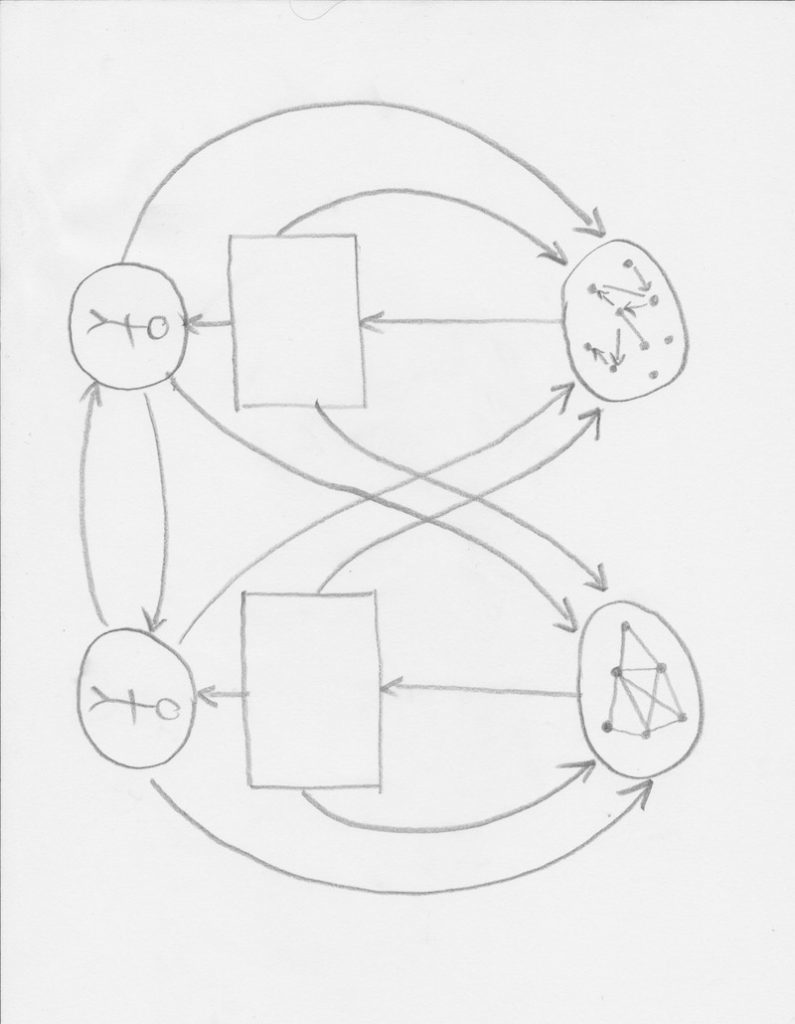

In what follows, I’m going to describe some of the inner processes of The Wisdom of Crowds (working title)–one of the experiments working along these systemic lines that we presented at the Cybernetic, Algorithmic, Systemic Theater Symposium. The aim of the work is to turn the theater space, the audience, and the actors into a brain that is capable of solving problems and making a performance. To do this, we bring together many ideas from cybernetics, algorithms, and complex adaptive systems. We are trying to attain “the spontaneous appearance of global order from local interactions, distributed across all components.” A text projected onto a screen instructs the audience to take out their mobile devices and to log in to a network. The audience discovers that they can write whatever they want, and that their writing is projected on the stage. Then they find out that they can upvote and downvote each other’s writing. The individual audience members are in fact connected through an app, forming a network. The app’s interface is simple and straightforward because we want the audience, first of all, to learn for itself how to use it; and, second, to see patterns emerging out of those local transformations and interactions, leading to a kind of “global order”–so the audience will connect and become a single thinking entity.

After all, we see that the “brain” is producing “subconscious” ideas which compete for dominance, in order to appear in the shared working space of the stage/“consciousness”–where after a brief moment, they will likely be replaced by content from another audience member. What passes as “consciousness” is simply the module that the whole network picks as the winner at any given time. Furthermore, the audience discovers that they can edit each other’s writing. This brings us to the cybernetic concept of “stigmergy”–a process that communal insects, Wikipedia, protein transcription networks and consciousness have in common and that basically comes down to an agent leaving a trace in the environment (a pheromone trail, a sentence or a protein), which later stimulates another agent to continue that task or to perform a subsequent action. Language in the brain, i.e. “talking to oneself,” functions as interiorized “stigmergy.”
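The competition for the stage can be sketched in a few lines. This is only an illustration under a stated assumption: that the winner is the text with the highest net vote count. The essay does not say how the app actually picks it, and the `Proposal` class and `winner` function are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Proposal:
    """One audience member's text, with the votes it has received so far."""
    author: str
    text: str
    upvotes: int = 0
    downvotes: int = 0

    @property
    def score(self) -> int:
        # Assumption: net votes decide the competition.
        return self.upvotes - self.downvotes


def winner(proposals: List[Proposal]) -> Optional[Proposal]:
    """Return the proposal with the highest net score, or None if there are none."""
    if not proposals:
        return None
    return max(proposals, key=lambda p: p.score)
```

Calling `winner` again after every vote gives the constant replacement described above: whichever text currently leads is what appears in “consciousness.”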

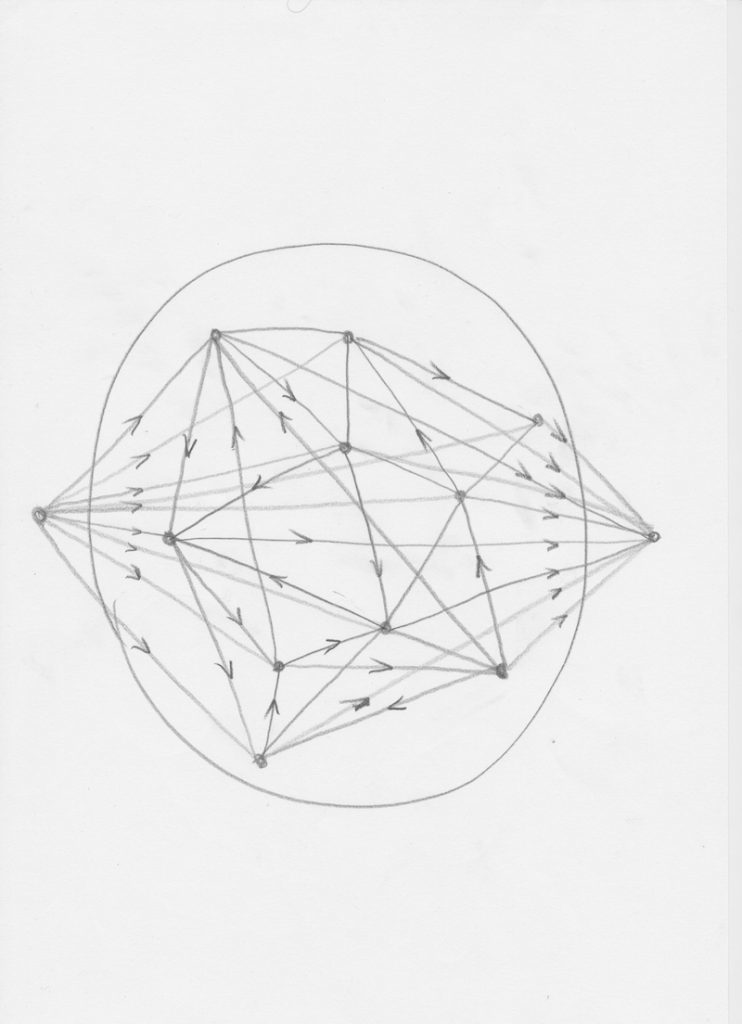

With an audience of 200 people, we have a network with 200 × 199 = 39,800 directed connections. The system monitors them all. Every time somebody edits or upvotes somebody else, the relationship between them is strengthened. Every time somebody is downvoted, the relationship is weakened. If a thought ends up winning the competition to get into “consciousness,” the connection is doubly strengthened. This means that, over time, the brain learns which are its best connections, its best collaborations. We are therefore in effect building a simple neural net–an algorithm that mimics the connections of neurons found in nature–of the kind that is currently having great success in the areas of recognition and deep learning.
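The bookkeeping described here can be sketched as a table of directed connection strengths. The class below is a hypothetical illustration: the step size of 1.0 and the reading of “doubly strengthened” as twice the normal step are assumptions, not details from the actual app.

```python
from collections import defaultdict
from typing import DefaultDict, Tuple


class AudienceNetwork:
    """Directed connection strengths between audience members (hypothetical sketch)."""

    def __init__(self, size: int, step: float = 1.0) -> None:
        self.size = size
        self.step = step  # assumed amount by which one action changes a connection
        self.weights: DefaultDict[Tuple[int, int], float] = defaultdict(float)

    def possible_connections(self) -> int:
        """Each member can connect to every other member, in both directions."""
        return self.size * (self.size - 1)

    def upvote_or_edit(self, source: int, target: int, won: bool = False) -> None:
        """Strengthen source -> target; a winning thought counts double (assumption)."""
        self.weights[(source, target)] += self.step * (2 if won else 1)

    def downvote(self, source: int, target: int) -> None:
        """Weaken source -> target."""
        self.weights[(source, target)] -= self.step
```

Over many rounds the largest values in `weights` mark the pairs that collaborate best, which is the sense in which the network “learns.”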

Silicon, computer-based neural nets have been trained to recognize images of cats, for example. Interestingly, programmers discovered they could reverse engineer the neural nets: by asking them to recursively look for cats in images that don’t contain cats, and by slightly enhancing the region that stimulated something in their cat recognition networks, pictures of strange cats’ faces do in fact appear in the image. In The Wisdom of Crowds, the proposition is similar: if a theater audience can recognize a performance, and if we engineer them into a neural net, then they can create a performance.

Next, we divide the network into two sub-networks based on the strongest connections, thus effectively producing two sub-brains. These two brain systems write to each other–they are linked by the desire for communication. At the same time, two actors appear on stage, in front of the texts created by the two audience brains. Soon the audience brains learn that the actors will say or do whatever is written. They are like the bodies or the imaginations of the two brains. The systems are subsequently connected to a larger system that takes up the whole of the theater. Each brain module can use an actor to affect its environment, including the other actor and the whole shared stigmergic space. This, in turn, becomes the input for the second brain-module, which is now activated to respond to the first, creating a feedback loop. Out of the whole mind, a performance emerges.
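One way to divide a network along its strongest connections is to merge members pair by pair, strongest connection first, until only two groups remain. This is a sketch of that idea (a form of single-linkage clustering), offered as an assumption: the essay does not specify the method used in the performance, and `split_in_two` is a hypothetical name.

```python
from typing import Dict, List, Set, Tuple


def split_in_two(
    members: List[str], weights: Dict[Tuple[str, str], float]
) -> List[Set[str]]:
    """Merge members along the strongest connections until two groups remain."""
    parent = {m: m for m in members}

    def find(m: str) -> str:
        # Follow parent links to the representative of m's group.
        while parent[m] != m:
            parent[m] = parent[parent[m]]  # shorten the path as we go
            m = parent[m]
        return m

    groups = len(members)
    strongest_first = sorted(weights.items(), key=lambda kv: kv[1], reverse=True)
    for (a, b), _ in strongest_first:
        if groups <= 2:
            break
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_a] = root_b  # join the two groups
            groups -= 1

    clusters: Dict[str, Set[str]] = {}
    for m in members:
        clusters.setdefault(find(m), set()).add(m)
    return list(clusters.values())
```

If the audience has too few connections to link everyone, this sketch returns more than two groups; a real system would need a rule for assigning those leftover members.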

Moreover, what we notice as we build a complex system theater mind, is that this mind resembles society. Coming back to Bateson:

“Let me list what seem to me to be those essential minimal characteristics of a system, which I will accept as characteristics of mind:

– The system shall operate with and upon differences.

– The system shall consist of closed loops or networks of pathways along which differences and transforms of differences shall be transmitted. (What is transmitted on a neuron is not an impulse, it is news of a difference.)

– Many events within the system shall be energized by the respondent part rather than by impact from the triggering part.

– The system shall show self-correctiveness in the direction of homeostasis and/or in the direction of runaway. Self-correctiveness implies trial and error.

Now, these minimal characteristics of mind are generated whenever and wherever the appropriate circuit structure of causal loops exists. Mind is a necessary, an inevitable function of the appropriate complexity, wherever that complexity occurs. But that complexity occurs in a great many other places besides the inside of my head and yours. […] let me say that a redwood forest or a coral reef with its aggregate of organisms interlocking in their relationships has the necessary general structure. […] A human society is like this with closed loops of causation. Every human organization shows both the self-corrective characteristic and has the potentiality for runaway.” (Bateson 1978, 488)

An extended mind

It turns out that “mind” and “complex adaptive system” are synonyms. Theater is a complex adaptive system, hence it is a mind. The units of evolution and mind are the same–they both turn out, in the broadest sense, to be responsible for preserving and developing differences and complexes of differences in networks.

theater = complex system = mind = evolution

Our mind is a model, a small-scale piece of the same pattern that is pervasive in the whole world–not a mirror of that world. And the same can be said about theater.

As a complex system, as a mind, as evolution, theater becomes a looking glass with which to observe, shape, and reflect upon this brave new systemic world–for theater audiences and scientists alike. As the systems biologist Hans Westerhoff, Professor of Synthetic Systems Biology (Free University of Amsterdam), has said: at the cellular level, real biological systems are incomprehensible to the individual human mind. But if we join many systems biologists and artists together as an extended mind, then perhaps it becomes possible to build and think about such a system from the inside.

The mystery of consciousness diminishes when we see that it belongs to a network and that, as in theater, the network extends beyond the individual actor or audience member into the whole system. Consciousness is a property of the whole then, collectively created and distributed, transferred onto each part, each individual, who in turn expresses the whole, using the same terms–I, you, we, it.

“Nobody is really in control, so we give up control, then it turns out that what I really want is

what you want.

And I don’t know what you want.

Surprise me.” (Alan Watts)

My special thanks to

Francis Heylighen, whose lecture series Cognitive Systems at the Free University of Brussels has been invaluable in the formulation of this essay – as were our conversations.

Works cited

Francis Heylighen, Cognitive Systems: a cybernetic perspective on the new science of the mind (notes 2014-2015, ECCO: Evolution, Complexity and Cognition, Free University of Brussels).

Daniel Dennett, Consciousness Explained, Little, Brown and Co., 1991.

David Bohm, Wholeness and the Implicate Order, Routledge, 1980.

David Bohm, Thought as a System, Routledge, 1992.

Gregory Bateson, Steps to an Ecology of Mind, University of Chicago Press, 1978.

Alan Watts, Nature, Man, Woman, Vintage Books, 1958.

Francisco J. Varela, Evan Thompson and Eleanor Rosch, The Embodied Mind: Cognitive Science and Human Experience, Massachusetts Institute of Technology, 1993.

James Surowiecki, The Wisdom of Crowds, Doubleday, Anchor, 2004.

This article originally appeared in Etcetera on December 18, 2018, and has been reposted with permission.

This post was written by the author in their personal capacity. The opinions expressed in this article are the author’s own and do not reflect the view of The Theatre Times, their staff or collaborators.

This post was written by Orion Maxted.
